What is Model Context Protocol (MCP)

Model Context Protocol (MCP) is an open standard that allows AI models to securely connect to external tools, systems, and data sources in real time. It enables AI to retrieve context, execute actions, and interact with enterprise systems without requiring custom integrations.

This makes AI systems more scalable, secure, and production-ready for enterprise environments.

At its core, MCP separates AI reasoning from system connectivity. The AI model focuses on understanding intent and decision‑making, while MCP governs how the model safely accesses data, executes tools, and respects enterprise controls.

This separation is critical for scaling Generative AI across complex IT environments.

Why Was MCP Created?

MCP was created to solve several systemic problems that limited enterprise adoption of Generative AI.

- First, organizations needed a way to give AI access to real‑time, authoritative data without embedding sensitive information directly into prompts.

- Second, enterprises required consistent governance and observability across AI interactions with business systems.

- Third, AI builders needed a reusable, scalable way to connect models to tools without rebuilding integrations for every new model or workflow.

How Was MCP Created?

MCP was created in response to the growing fragmentation in how AI models connected to tools and data. Early Generative AI implementations relied heavily on prompt engineering, custom plugins, or tightly coupled function calls that were brittle, difficult to govern, and expensive to maintain.

As AI agents emerged that could take actions rather than simply generate text, the lack of a universal integration standard became a major constraint.

The protocol was introduced as an open standard to address this gap, drawing inspiration from successful technology abstractions such as device drivers, API gateways, and service meshes.

Its design reflects lessons learned from enterprise integration patterns, security frameworks, and automation platforms, making it particularly relevant for production‑grade AI deployments.

The Importance of MCP

MCP is important because it enables AI to operate as a first‑class participant in enterprise workflows.

Instead of acting as an isolated assistant, an MCP‑enabled model can retrieve live system data, trigger automations, update records, and collaborate with existing integration and automation platforms.

This is a critical shift for organizations investing in automation, integration, and GenAI convergence.

- For enterprise leaders, MCP reduces integration sprawl, lowers security risk, and accelerates time to value.

- For IT teams, it provides a standardized way to manage AI access alongside APIs, RPA bots, and iPaaS workflows.

- For the business, MCP unlocks AI‑driven processes that are adaptive, context‑aware, and continuously improving.

MCP Architecture and Core Components

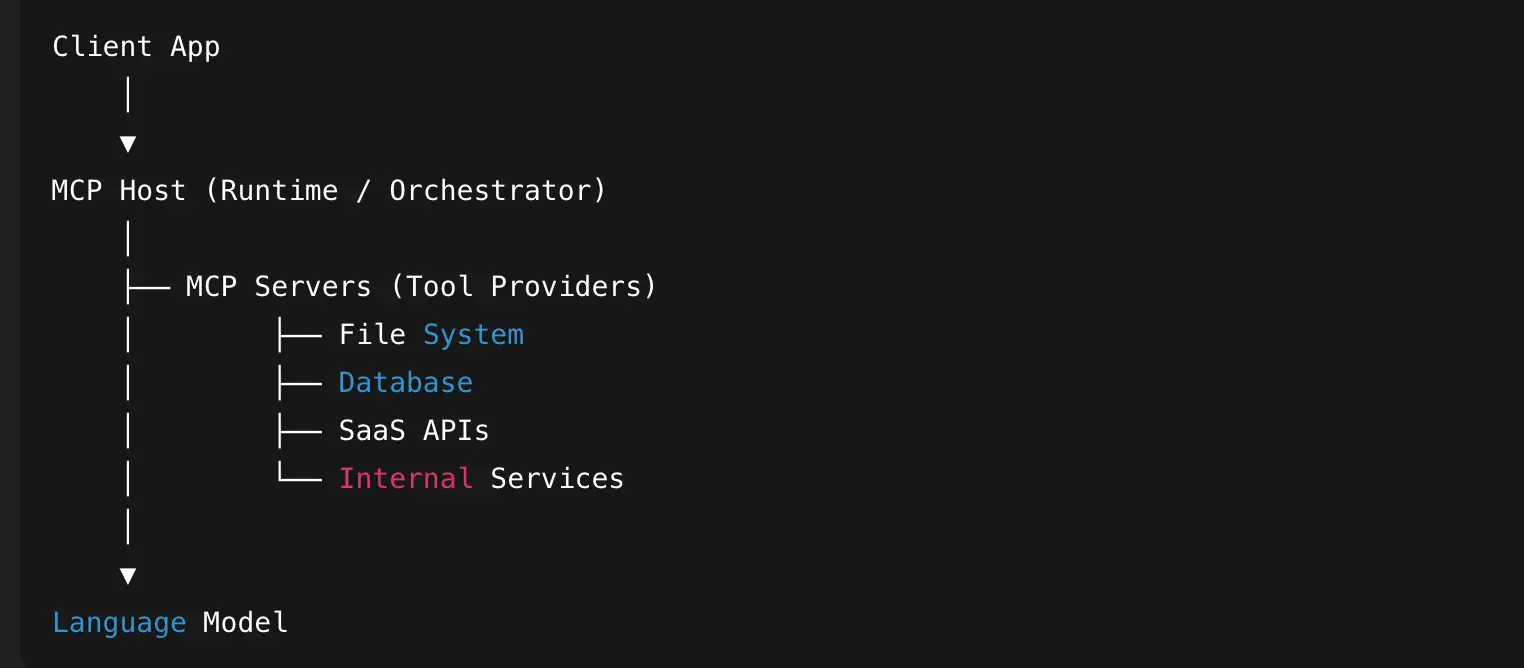

MCP is best understood as a layered architecture that cleanly separates AI reasoning, enterprise context, and system execution. This separation is what allows MCP to scale safely across complex enterprise environments while supporting multiple models, tools, and workflows.

MCP Architecture Overview (high-level)

At a high level, MCP sits between AI models and enterprise systems.

The AI model does not connect directly to databases, APIs, or applications. Instead, it interacts through MCP-defined interfaces that control what context can be accessed and what actions can be taken.

Viewed this way, MCP is a standardized architecture that gives AI models secure access to external tools, data sources, and systems through a consistent interface.

It separates four concerns:

- The model

- The context layer

- The tools/resources

- The client application

This enables modular, secure, and scalable AI integrations.

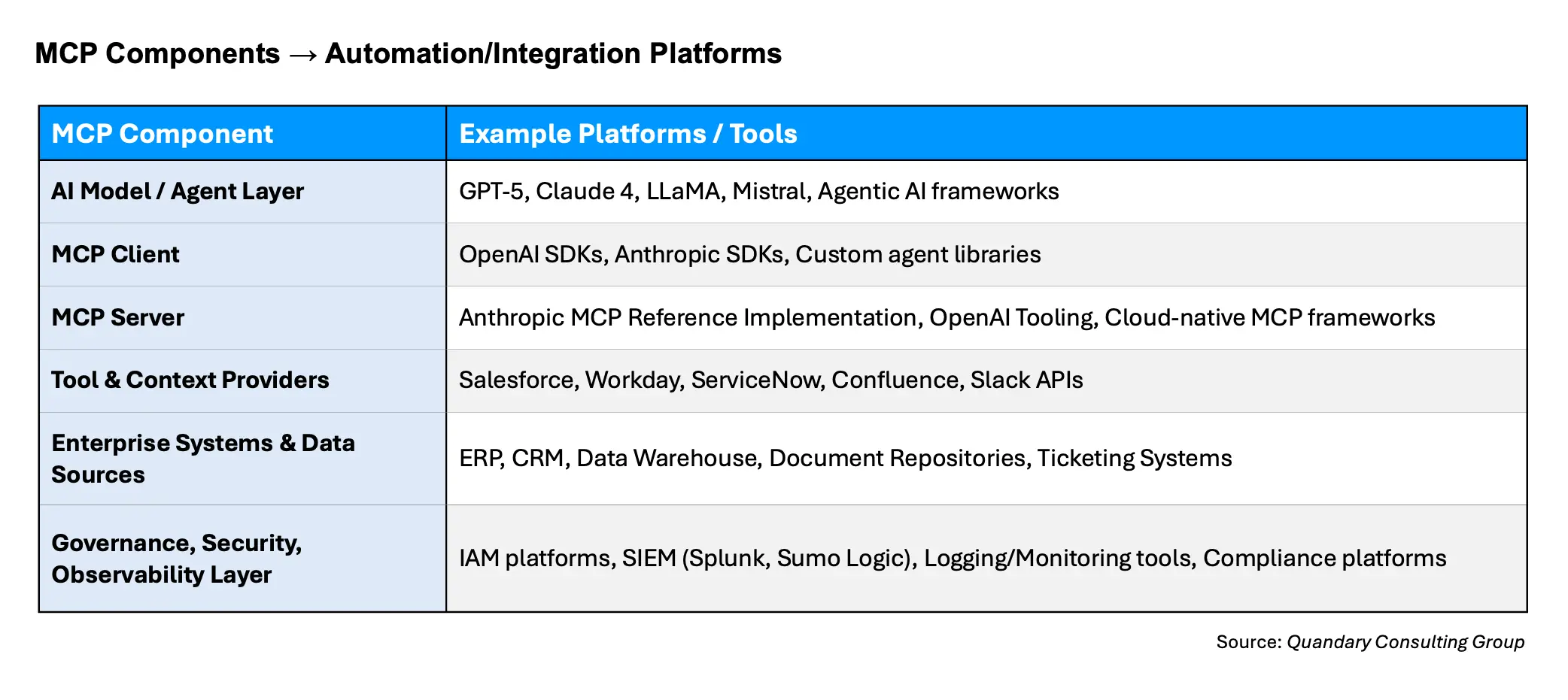

What are the Core Components of MCP?

- AI Model or Agent Layer – Responsible for reasoning, planning, and decision-making.

- MCP Client – Translates model intents into MCP-compliant requests and handles structured responses.

- MCP Server – Core execution and control hub; enforces policies, validates requests, and manages communication with downstream systems.

- Tool and Context Providers – MCP-compliant connectors exposing specific capabilities with defined inputs, outputs, and permissions.

- Enterprise Systems and Data Sources – CRMs, ERPs, data warehouses, document repositories, ticketing systems, and other operational platforms.

- Governance, Security, and Observability Layer – Provides authentication, authorization, audit logging, monitoring, rate limiting, and integration with enterprise security tools.

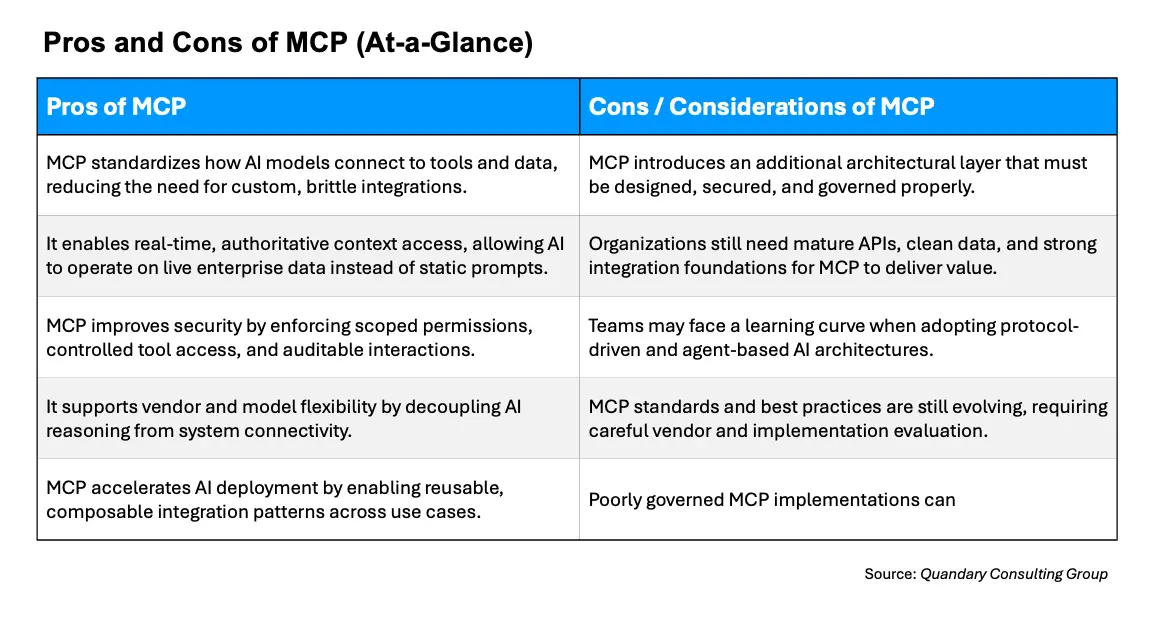
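
The component list above can be made concrete with a small data model. What follows is a minimal sketch under stated assumptions, not part of the MCP specification: the names `ToolProvider`, `required_scope`, and `crm.lookup_account` are hypothetical and exist only to illustrate how a provider might declare its inputs and permissions.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class ToolProvider:
    """Hypothetical connector: one capability with declared inputs and a permission scope."""
    name: str
    description: str
    input_fields: Dict[str, type]   # declared inputs: field name -> expected type
    required_scope: str             # permission a caller must hold (assumed naming)
    handler: Callable[..., Any]     # function that talks to the downstream system

    def validate(self, arguments: Dict[str, Any]) -> None:
        """Reject calls with missing, unexpected, or wrongly typed arguments."""
        missing = set(self.input_fields) - set(arguments)
        extra = set(arguments) - set(self.input_fields)
        if missing or extra:
            raise ValueError(
                f"bad arguments: missing={sorted(missing)} extra={sorted(extra)}"
            )
        for key, expected in self.input_fields.items():
            if not isinstance(arguments[key], expected):
                raise TypeError(f"{key} must be {expected.__name__}")


def lookup_account(account_id: str) -> Dict[str, str]:
    # Stand-in for a CRM query; a real provider would call the CRM's API here.
    return {"account_id": account_id, "status": "active"}


crm_lookup = ToolProvider(
    name="crm.lookup_account",
    description="Fetch one CRM account by ID",
    input_fields={"account_id": str},
    required_scope="crm:read",
    handler=lookup_account,
)
```

Declaring inputs and scope next to the handler keeps validation in one place, so a server could check a request before any enterprise system is touched.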

What are the Key Benefits and Risks of MCP?

Benefits of MCP

MCP provides standardization across AI integrations, significantly reducing custom development effort.

Key benefits of MCP include:

- Standardized AI integrations

- Reduced development effort

- Improved security and governance

- Faster time to value

- Flexibility across models and tools

MCP also improves security by enforcing scoped access, permissions, and auditability.

Risks of MCP

While powerful, MCP introduces additional architectural components that must be designed and governed properly.

Potential risks include:

- Increased architectural complexity

- Need for strong governance and security design

- Dependency on data quality and API maturity

- Evolving standards and best practices

Organizations should approach MCP as part of a broader integration and AI strategy.

Top MCP Implementations and Platforms

iPaaS‑Integrated MCP Solutions – Platforms that embed MCP concepts directly into automation and integration workflows, enabling AI‑driven orchestration.

- In our assessment, the strongest iPaaS‑integrated MCP solution on the market right now is from Workato, whose release of pre-built MCP servers has drawn significant attention.

- Quandary Consulting Group is a certified Workato partner.

- Quandary also holds certifications as an Onboarding Specialist Workato Partner and an Implementation Onboarding Workato Partner.

Anthropic MCP Reference Implementation – A foundational, open implementation that defines the core protocol and serves as a baseline for vendors and builders.

OpenAI MCP‑Compatible Tooling – Widely adopted in GenAI ecosystems, enabling structured tool access and agent workflows at scale.

Cloud Provider MCP Frameworks – Emerging implementations within major cloud ecosystems that integrate MCP concepts with native security and identity controls.

Custom Enterprise MCP Gateways – Purpose‑built implementations designed for highly regulated or complex environments where control and observability are paramount.

What Happens When an AI Agent Uses MCP?

When an AI agent uses Model Context Protocol (MCP), something subtle but powerful happens: the agent stops being a standalone language model and starts behaving like a connected system. Instead of relying only on its training data, it can dynamically discover tools, access live data, and execute structured actions in real time. The result is a shift from “generate text” to “orchestrate outcomes.”

But what actually happens under the hood?

- User or System Request – A business user or automated process initiates a request that requires AI reasoning and action.

- AI Model Processes Request – The AI model or agent determines the necessary steps and formulates a plan.

- MCP Client Request – The AI model communicates via the MCP client, issuing structured requests to the MCP server.

- MCP Server Validation – The server validates the request, enforces access policies, checks permissions, and identifies relevant tool/context providers.

- Tool or Data Access – MCP invokes the appropriate connectors to retrieve data or execute actions against enterprise systems.

- Response Handling – Results from the enterprise systems are returned through the MCP server and client back to the AI model.

- Decision or Action Completion – The AI model interprets results, may perform additional steps, and completes the intended workflow.

- Logging and Monitoring – All interactions are logged, monitored, and audited through the governance and observability layer for compliance and performance tracking.
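
The steps above can be sketched end to end in a few lines. MCP messages use JSON-RPC 2.0, and tool invocations use the `tools/call` method. Everything else here is an assumption made for illustration: the `ticket.get_status` tool, the scope strings, the in-memory `AUDIT_LOG`, and the choice of error codes are hypothetical, and a production server would sit behind real authentication and a transport.

```python
import json
from typing import Any, Callable, Dict, List, Set, Tuple


def get_ticket_status(ticket_id: str) -> Dict[str, str]:
    # Stand-in for a call to a ticketing system.
    return {"ticket_id": ticket_id, "status": "open"}


# Hypothetical registry: tool name -> (required scope, handler).
TOOLS: Dict[str, Tuple[str, Callable[..., Any]]] = {
    "ticket.get_status": ("tickets:read", get_ticket_status),
}
AUDIT_LOG: List[Dict[str, Any]] = []


def build_request(request_id: int, tool: str, arguments: Dict[str, Any]) -> str:
    """Client side: wrap the model's intent in a JSON-RPC 2.0 `tools/call` message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })


def handle_request(raw: str, caller_scopes: Set[str]) -> Dict[str, Any]:
    """Server side: validate, check permissions, invoke the tool, and log the outcome."""
    request = json.loads(raw)
    params = request.get("params", {})
    name = params.get("name")
    entry = {"id": request.get("id"), "tool": name, "allowed": False}
    AUDIT_LOG.append(entry)  # every request is logged, including rejected ones

    if request.get("method") != "tools/call" or name not in TOOLS:
        # -32601 is the JSON-RPC 2.0 "Method not found" code.
        return {"jsonrpc": "2.0", "id": request.get("id"),
                "error": {"code": -32601, "message": "unknown method or tool"}}

    required_scope, handler = TOOLS[name]
    if required_scope not in caller_scopes:
        # -32000 falls in the JSON-RPC range reserved for implementation-defined errors.
        return {"jsonrpc": "2.0", "id": request.get("id"),
                "error": {"code": -32000, "message": "permission denied"}}

    entry["allowed"] = True
    result = handler(**params.get("arguments", {}))
    return {"jsonrpc": "2.0", "id": request.get("id"),
            "result": {"content": [{"type": "text", "text": json.dumps(result)}]}}


raw = build_request(1, "ticket.get_status", {"ticket_id": "T-42"})
ok = handle_request(raw, caller_scopes={"tickets:read"})
denied = handle_request(raw, caller_scopes=set())
```

The same request succeeds or fails depending only on the caller's scopes, and both attempts end up in the audit log, which is the behavior the validation and logging steps describe.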

Common MCP Architecture Mistakes to Avoid

Bypassing Security and Governance Layers – Directly connecting AI models to systems without MCP controls can create compliance and risk exposure.

Tightly Coupling Models to Tools – Hard-coding integrations reduces flexibility, increases maintenance burden, and hinders scaling.

Ignoring Observability – Failing to log and monitor interactions limits auditability, troubleshooting, and performance optimization.

Underestimating Data Quality Needs – MCP enables access but does not solve poor data hygiene; garbage in results in garbage out.

Neglecting Rate Limits and API Constraints – Overloading enterprise systems can occur without proper throttling and validation.

Overcomplicating Architecture – Adding unnecessary layers or connectors can introduce latency and operational complexity.
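
For the rate-limit mistake in particular, a small throttle in front of each connector is often enough to protect a downstream system. The sketch below is one possible approach (a sliding-window limiter), not something MCP prescribes; the class name and parameters are hypothetical.

```python
import time
from collections import deque
from typing import Callable, Deque


class SlidingWindowLimiter:
    """Allow at most `max_calls` within any `window_seconds` span."""

    def __init__(self, max_calls: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._calls: Deque[float] = deque()

    def allow(self) -> bool:
        """Return True and record the call if it fits in the window, else False."""
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self._calls and now - self._calls[0] >= self.window_seconds:
            self._calls.popleft()
        if len(self._calls) < self.max_calls:
            self._calls.append(now)
            return True
        return False
```

A server could call `allow()` before invoking a tool and return an error to the model when it yields `False`, so the agent backs off instead of flooding the API.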

MCP Client and MCP Server Ecosystem

Since its introduction in late 2024, MCP has experienced explosive growth.

Some MCP marketplaces list roughly 16,000 unique servers at the time of writing, and the real number (including servers that aren’t made public) could be considerably higher.

Examples of MCP Clients:

- Claude Desktop: The original, first-party desktop application with comprehensive MCP client support

- Claude Code: Command-line interface for agentic coding, complete with MCP capabilities

- Cursor: The premier AI-enhanced IDE with one-click MCP server installation

- Windsurf: Previously known as Codeium, an IDE with MCP support through the Cascade client

- Continue: Open-source AI coding companion for JetBrains and VS Code

- Visual Studio Code: Microsoft’s IDE, which added MCP support in June 2025

- JetBrains IDEs: Full coding suite that added AI Assistant MCP integration in August 2025

- Xcode: Apple’s IDE, which received MCP support through GitHub Copilot in August 2025

- Eclipse: Open-source IDE with MCP support through GitHub Copilot as of August 2025

- Zed: Performance-focused code editor with MCP prompts as slash commands

- Sourcegraph Cody: AI coding assistant implementing MCP through OpenCtx

- LangChain: Framework with MCP adapters for agent development

- Firebase Genkit: Google’s AI development framework with MCP support

- Superinterface: Platform for adding in-app AI assistants with MCP functionality

Notably, IDEs like Cursor and Windsurf have turned MCP server setup into a one-click affair. This dramatically lowers the barrier for developer adoption, especially among those already using AI-enabled tools.

However, consumer-facing applications like Claude Desktop still require manual configuration with JSON files, highlighting an increasingly apparent gap between developer tooling and consumer use cases.
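
To give a sense of what that manual configuration involves, the sketch below builds a config entry of the kind Claude Desktop is commonly documented to read: a JSON file (typically `claude_desktop_config.json`) with a top-level `mcpServers` key. The directory path is a placeholder, and the file location varies by operating system, so check the client's documentation before relying on this.

```python
import json

# Placeholder directory the filesystem server may access; replace with a real path.
ALLOWED_DIR = "/Users/example/Documents"

config = {
    "mcpServers": {
        # The key "filesystem" is just a label chosen for this entry.
        "filesystem": {
            "command": "npx",
            "args": ["-y", "@modelcontextprotocol/server-filesystem", ALLOWED_DIR],
        }
    }
}

# Serialize the entry; the output is what would be pasted into the config file.
config_text = json.dumps(config, indent=2)
print(config_text)
```

Each entry names a command the client launches as a local process, which is why adding a server by hand means editing this file and restarting the application.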

What are the Best MCP Servers?

The MCP ecosystem comprises a diverse range of servers including reference servers (created by the protocol maintainers as implementation examples), official integrations (maintained by companies for their platforms), and community servers (developed by independent contributors).

The reference servers, maintained by MCP project contributors, include fundamental integrations such as:

Git

This server offers tools to read, search, and manipulate Git repositories via LLMs.

While relatively simple in its capabilities, the Git MCP reference server provides an excellent model for building your own implementation.

Filesystem

A Node.js server that exposes filesystem operations over MCP: reading and writing files, creating and deleting directories, and searching.

The server offers dynamic directory access via Roots, a recent MCP feature that defines the boundaries within which the server may operate on the filesystem.
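
The idea of a filesystem boundary can be illustrated with a short path check. This is a sketch of the general technique, not the Filesystem server's actual code; the function name and the `/srv/project` paths are hypothetical.

```python
from pathlib import Path
from typing import Iterable


def is_within_roots(candidate: str, roots: Iterable[str]) -> bool:
    """Return True if `candidate` resolves to a location inside one of `roots`."""
    # resolve() normalizes `..` segments and follows symlinks where they exist,
    # so a path cannot escape a root by traversal tricks.
    resolved = Path(candidate).resolve()
    for root in roots:
        root_path = Path(root).resolve()
        if resolved == root_path or root_path in resolved.parents:
            return True
    return False


print(is_within_roots("/srv/project/docs/a.txt", ["/srv/project"]))
print(is_within_roots("/srv/project/../secrets.txt", ["/srv/project"]))
```

Comparing resolved path components, rather than string prefixes, avoids treating `/srv/project-other` as if it were inside `/srv/project`.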

Fetch

This MCP server fetches web content and converts HTML to markdown for easier consumption by LLMs.

This lets models retrieve and process online content without wading through raw markup.
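
To show why the conversion helps, here is a deliberately tiny HTML-to-markdown sketch built on Python's standard `html.parser`. It is an illustration only and not how the Fetch server is implemented; the class name `MarkdownSketch` is hypothetical, and it handles just headings, paragraphs, and links while dropping script and style content.

```python
from html.parser import HTMLParser
from typing import List, Optional


class MarkdownSketch(HTMLParser):
    """Convert a small subset of HTML (h1-h3, p, a) to markdown; skip script/style."""

    def __init__(self) -> None:
        super().__init__()
        self.parts: List[str] = []
        self._href: Optional[str] = None
        self._skip_depth = 0  # greater than zero while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag in ("h1", "h2", "h3"):
            self.parts.append("\n\n" + "#" * int(tag[1]) + " ")
        elif tag == "p":
            self.parts.append("\n\n")
        elif tag == "a":
            self._href = dict(attrs).get("href")
            self.parts.append("[")

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._skip_depth = max(0, self._skip_depth - 1)
        elif tag == "a":
            self.parts.append(f"]({self._href or ''})")
            self._href = None

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.parts.append(data)


def html_to_markdown(html: str) -> str:
    parser = MarkdownSketch()
    parser.feed(html)
    parser.close()
    return "".join(parser.parts).strip()


page = "<h1>Title</h1><p>See <a href='https://example.com'>docs</a>.</p><script>x()</script>"
print(html_to_markdown(page))
```

The markdown output keeps the heading, the sentence, and the link target while discarding tags and scripts, which leaves the model fewer irrelevant tokens to process.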

Strategic Takeaway for Enterprise Leaders

MCP is not just a technical protocol; it is a strategic enabler for operational AI. It provides the missing link between GenAI intelligence and enterprise execution.

Organizations that adopt MCP thoughtfully can move faster, scale safer, and integrate AI more deeply into their core business processes.

From Quandary Consulting Group’s perspective, MCP should be evaluated as part of a broader automation and integration roadmap.

When combined with strong process design, modern integration platforms, and responsible AI governance, MCP becomes a catalyst for transforming how enterprises work, decide, and innovate.

Top FAQs for Model Context Protocol (MCP)

What is Model Context Protocol (MCP)?

Model Context Protocol (MCP) is an open standard that allows AI models to securely connect to external tools, systems, and data sources in real time. It enables AI to retrieve context, execute actions, and interact with enterprise systems without requiring custom integrations.

This makes AI systems more scalable, secure, and production-ready for enterprise environments.

Why is MCP important for enterprise AI?

MCP is important because it transforms AI from a standalone tool into a connected system that can take action inside business workflows.

For enterprises, this means:

- Faster AI deployment

- Reduced integration complexity

- Improved governance and security

- Real-time access to business data

At Quandary Consulting Group, we see MCP as a key enabler for scaling AI beyond proof-of-concept into production.

How does MCP work?

MCP works as a standardized layer between AI models and enterprise systems.

Instead of letting AI models access APIs or databases directly, MCP routes each interaction through four steps:

- The AI model sends a structured request through an MCP client

- The MCP server validates permissions and policies

- Tools and systems are securely accessed

- Results are returned to the AI for decision-making

This architecture ensures secure, controlled, and auditable AI interactions.

What problems does MCP solve?

MCP solves several major challenges in enterprise AI:

- Eliminates custom, one-off integrations

- Enables secure access to real-time data

- Standardizes how AI interacts with tools

- Improves governance, observability, and compliance

It helps organizations move from experimental AI to scalable, operational AI systems.

What is the difference between MCP and APIs?

APIs allow systems to communicate, but MCP provides a standardized framework for how AI models use those APIs.

Key difference:

- API: Defines endpoints for data/services

- MCP: Defines how AI discovers, accesses, and uses those endpoints securely

MCP sits on top of APIs to make them usable by AI agents in a consistent way.
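
The difference shows up in the messages themselves. In MCP, a client first discovers tools with a JSON-RPC `tools/list` request, and each tool in the reply carries a `name`, a `description`, and an `inputSchema`. The sketch below uses that message shape; the `get_invoice` tool, its fields, and the `build_call` helper are hypothetical and stand in for an API wrapped by a server.

```python
from typing import Any, Dict, List

# Discovery: the client asks the server which tools exist.
list_request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}

# Example reply: each tool is described by name, description, and a JSON Schema for inputs.
list_response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [{
            "name": "get_invoice",  # hypothetical tool wrapping a billing API endpoint
            "description": "Fetch an invoice by ID from the billing API",
            "inputSchema": {
                "type": "object",
                "properties": {"invoice_id": {"type": "string"}},
                "required": ["invoice_id"],
            },
        }]
    },
}


def build_call(request_id: int, tool_name: str, arguments: Dict[str, Any],
               tools: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Build a `tools/call` request, refusing tools the server did not advertise
    and calls that omit an argument the schema marks as required."""
    by_name = {tool["name"]: tool for tool in tools}
    if tool_name not in by_name:
        raise KeyError(f"tool not advertised: {tool_name}")
    required = by_name[tool_name]["inputSchema"].get("required", [])
    missing = [key for key in required if key not in arguments]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/call",
            "params": {"name": tool_name, "arguments": arguments}}


call = build_call(2, "get_invoice", {"invoice_id": "INV-7"},
                  list_response["result"]["tools"])
```

A plain API client needs the endpoint and its parameters hard-coded in advance; here the agent learns both at runtime from the tool description.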

What are the core components of MCP architecture?

MCP includes several key components:

- AI Model / Agent: Handles reasoning and decision-making

- MCP Client: Translates model intent into structured requests

- MCP Server: Enforces security, validation, and orchestration

- Tool Providers: Expose capabilities (APIs, workflows, data)

- Enterprise Systems: CRM, ERP, databases, etc.

- Governance Layer: Security, logging, monitoring, and compliance

This layered approach enables scalable and secure AI deployments.

What are examples of MCP use cases?

Common MCP use cases include:

- AI agents retrieving CRM data in real time

- Automating workflows across ERP and ticketing systems

- AI-driven customer support actions (not just responses)

- Financial operations automation with audit trails

- Intelligent document processing and decisioning

These use cases shift AI from content generation to action execution.

Is MCP only for large enterprises?

While MCP is especially valuable for large enterprises with complex systems, it can benefit any organization that:

- Uses multiple systems or tools

- Needs secure AI integrations

- Wants to scale AI across workflows

However, its impact is greatest in enterprise environments with high integration complexity.

What are the benefits of MCP?

Key benefits of MCP include:

- Standardized AI integrations

- Reduced development effort

- Improved security and governance

- Faster time to value

- Flexibility across models and tools

It also reduces vendor lock-in by allowing multiple AI models to use the same interfaces.

What are the risks of MCP?

Potential risks include:

- Increased architectural complexity

- Need for strong governance and security design

- Dependency on data quality and API maturity

- Evolving standards and best practices

Organizations should approach MCP as part of a broader integration and AI strategy.

How does MCP improve AI security?

MCP improves security by:

- Enforcing access controls and permissions

- Providing audit logs for all AI actions

- Preventing direct model access to sensitive systems

- Enabling centralized governance

This ensures AI operates within enterprise security frameworks.

What is an MCP server?

An MCP server is the central control layer that:

- Validates requests from AI models

- Enforces policies and permissions

- Connects to tools and enterprise systems

- Logs and monitors interactions

It acts as the gatekeeper between AI and business systems.

What is an MCP client?

An MCP client translates AI model intent into structured requests that the MCP server can process.

It ensures that communication between the model and systems follows the MCP standard.

How is MCP used in AI agents?

MCP enables AI agents to:

- Discover available tools dynamically

- Access real-time data

- Execute structured actions

- Iterate on results

This allows agents to move beyond answering questions to completing tasks and workflows.

What companies or platforms support MCP?

MCP is supported or aligned with:

- Anthropic (reference implementation)

- OpenAI ecosystems (tooling and agent frameworks)

- Cloud providers (emerging MCP-style architectures)

- Integration platforms like Workato (iPaaS, automation tools)

Adoption is rapidly growing across the AI ecosystem.

How do companies get started with MCP?

To get started with MCP:

- Identify AI use cases that require system interaction

- Evaluate existing APIs and integration maturity

- Design governance and security frameworks

- Implement MCP-compatible tools and architecture

- Start with pilot workflows and scale

Working with experts like Quandary Consulting Group (Denver, Colorado) can accelerate adoption and reduce risk.

Where can I find MCP consulting or implementation services?

If you're looking for Model Context Protocol (MCP) consulting in Denver, Colorado or across the United States, Quandary Consulting Group helps enterprises design, implement, and scale MCP-enabled AI systems securely and efficiently.

- By: Kevin Shuler

- Title: CEO

- Email: kevin@quandarycg.com

- Date updated: 01/02/2026

© 2026 Quandary Consulting Group. All Rights Reserved.