How to Optimize Storage and Archiving for High-Volume Quickbase Environments

As organizations scale their use of Quickbase, data volume grows quickly, often beyond what the application was originally designed to handle. In high-usage Quickbase environments, this growth can lead to increased storage consumption, slower performance, and reduced maintainability.

The good news: these challenges are common, predictable, and solvable with the right approach.

By implementing proven Quickbase storage optimization and data archiving strategies, organizations can maintain strong application performance, reduce infrastructure costs, and ensure long-term scalability.

This guide provides a practical, experience-driven framework for optimizing storage and archiving in large-scale Quickbase deployments. You’ll learn how to manage growing datasets, improve performance, and extend the lifecycle of your Quickbase applications without compromising reliability.

What Is Considered Heavy Quickbase Usage?

Heavy Quickbase usage typically refers to applications that manage large volumes of data, support complex workflows, and operate as critical systems within an organization. As Quickbase environments scale—especially in enterprise and high-growth organizations—usage patterns can place increasing demands on performance, storage, and system reliability.

While definitions may vary by organization, the following characteristics are strong indicators of a high-usage Quickbase environment:

Long-Term Quickbase Implementations

Applications that store and manage multi-year datasets (5–15+ years of operational data), often without regular archiving or data lifecycle management.

High-Volume Quickbase Data

Databases containing hundreds of thousands to millions of records, which can impact query speed, reporting performance, and overall application responsiveness.

Extensive File Attachment Usage

Frequent use of Quickbase file attachments and embedded content, including:

- Images (JPG, PNG, GIF, SVG): Used for logos, photos, and UI elements in formula fields

- Video & Audio (MP4, MOV, MP3): Embedded or streamed within applications

- Documents (PDF, DOCX, XLSX, PPTX): Stored in file attachment fields for operational use

- Embedded Content: Integrations with platforms like YouTube, Vimeo, or other third-party tools via links or iframe embeds

Automations, Pipelines, and Integrations

Heavy reliance on Quickbase Pipelines, APIs, and third-party integrations, often running frequently and across multiple applications.

Business-Critical Workflows

Applications that support core operational processes, where performance issues directly impact business outcomes, user productivity, and system reliability.

What Are Quickbase Ecosystem Storage Constraints?

Quickbase storage constraints refer to the practical limits and performance considerations that emerge as applications scale in data volume, complexity, and usage.

In high-usage Quickbase environments—especially within growing U.S. enterprises and data-intensive organizations—these constraints can directly impact application speed, reliability, and long-term scalability.

As record counts increase, file attachments accumulate, and workflows become more complex, Quickbase applications may experience slower performance, increased load times, and reduced maintainability if storage is not actively managed.

Understanding how Quickbase handles data at both the table and application level is essential for optimizing performance and preventing system bottlenecks.

Key Quickbase Storage Constraints to Consider

Table Storage Limits and Record Growth

As Quickbase tables grow to hundreds of thousands or millions of records, performance can degrade—particularly in queries, form loads, and relationships between tables. Without proper data management or archiving, large tables can slow down even well-designed applications.

Application-Level Storage Considerations

At the app level, cumulative data—including records, attachments, and relationships—can strain overall system performance. Large, complex Quickbase applications require intentional architecture and data segmentation to remain scalable and efficient.

Attachment Size and Volume Impact

Heavy use of file attachments in Quickbase—including documents, images, and embedded media—can significantly increase storage consumption. High attachment volume not only affects storage limits but can also slow down record retrieval and user experience.

Performance Impact of Large Datasets

Large datasets can affect:

- Report load times

- Form rendering speed

- API response performance

- User experience across the application

As data volume increases, even small inefficiencies in design can compound into noticeable performance issues.

Reporting, Formulas, and Pipeline Sensitivity

Quickbase reports, formula fields, and Pipelines become more resource-intensive as data grows. Complex formulas, large reports, and frequently triggered automations may:

- Take longer to execute

- Fail under heavy load

- Create bottlenecks in business-critical workflows

Data Lifecycle Management in Quickbase: Active vs. Historical Data

Data lifecycle management in Quickbase is the practice of organizing, managing, and optimizing data as it moves through different stages of its lifecycle. In high-usage Quickbase environments—particularly in enterprise and data-driven organizations—this typically involves separating active (operational) data from historical data to maintain performance, reduce storage costs, and support long-term scalability.

As Quickbase applications grow, failing to distinguish between these data types can lead to slower performance, increased storage consumption, and unnecessary complexity. Implementing a clear data lifecycle strategy ensures that applications remain efficient, compliant, and easy to maintain.

Key Data Types in Quickbase Lifecycle Management

Active Data (Operational Data)

Active data refers to frequently accessed and regularly updated records that support day-to-day business operations. This data is critical for real-time workflows, reporting, and user interactions.

Common characteristics of active Quickbase data:

- Frequently viewed, edited, or updated

- Powers core business processes and workflows

- Used in reports, dashboards, and automations

- Requires fast performance and low latency

Historical Data

Historical data consists of records that are no longer needed for daily operations but must be retained for compliance, auditing, reporting, or long-term analysis.

Common characteristics of historical Quickbase data:

- Rarely accessed or modified

- Stored for regulatory compliance or internal policies

- Used for audits, trend analysis, or record-keeping

- Ideal candidates for archiving or offloading to external storage

Why This Distinction Matters

Separating active and historical data in Quickbase helps organizations:

- Improve application performance by reducing dataset size

- Optimize storage usage and costs

- Simplify reporting and data management

- Maintain compliance with data retention policies
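The active/historical split described above can be expressed as a simple rule. The following is a minimal sketch, not a Quickbase feature: the field names (`status`, `date_modified`), the status values, and the 365-day cutoff are all assumptions chosen for illustration, and your own policy may use different criteria.

```python
from datetime import date, timedelta

# Hypothetical cutoff: records untouched for this long are considered stale.
INACTIVITY_CUTOFF = timedelta(days=365)

# Hypothetical status values that mark a record as closed.
CLOSED_STATUSES = {"Closed", "Completed", "Cancelled"}


def classify(record: dict, today: date) -> str:
    """Return "active" or "historical" for a single record.

    A record counts as historical only when it is both closed and stale,
    so old-but-open records stay in the operational table.
    """
    is_closed = record["status"] in CLOSED_STATUSES
    is_stale = today - record["date_modified"] >= INACTIVITY_CUTOFF
    return "historical" if is_closed and is_stale else "active"


records = [
    {"id": 1, "status": "Open", "date_modified": date(2023, 1, 10)},
    {"id": 2, "status": "Closed", "date_modified": date(2023, 1, 10)},
    {"id": 3, "status": "Closed", "date_modified": date(2025, 11, 1)},
]
today = date(2026, 1, 27)
labels = {r["id"]: classify(r, today) for r in records}
```

Requiring both conditions is a deliberately conservative choice: it avoids archiving records that are old but still open, or closed but recently touched.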

What Are the Internal Storage Options for Quickbase Archiving?

Internal Quickbase archiving methods provide a structured way to manage data growth within the platform by relocating inactive or closed records out of primary operational tables. Outlined below are two common methods, using only native Quickbase capabilities, for archiving data that you have identified and classified as historical:

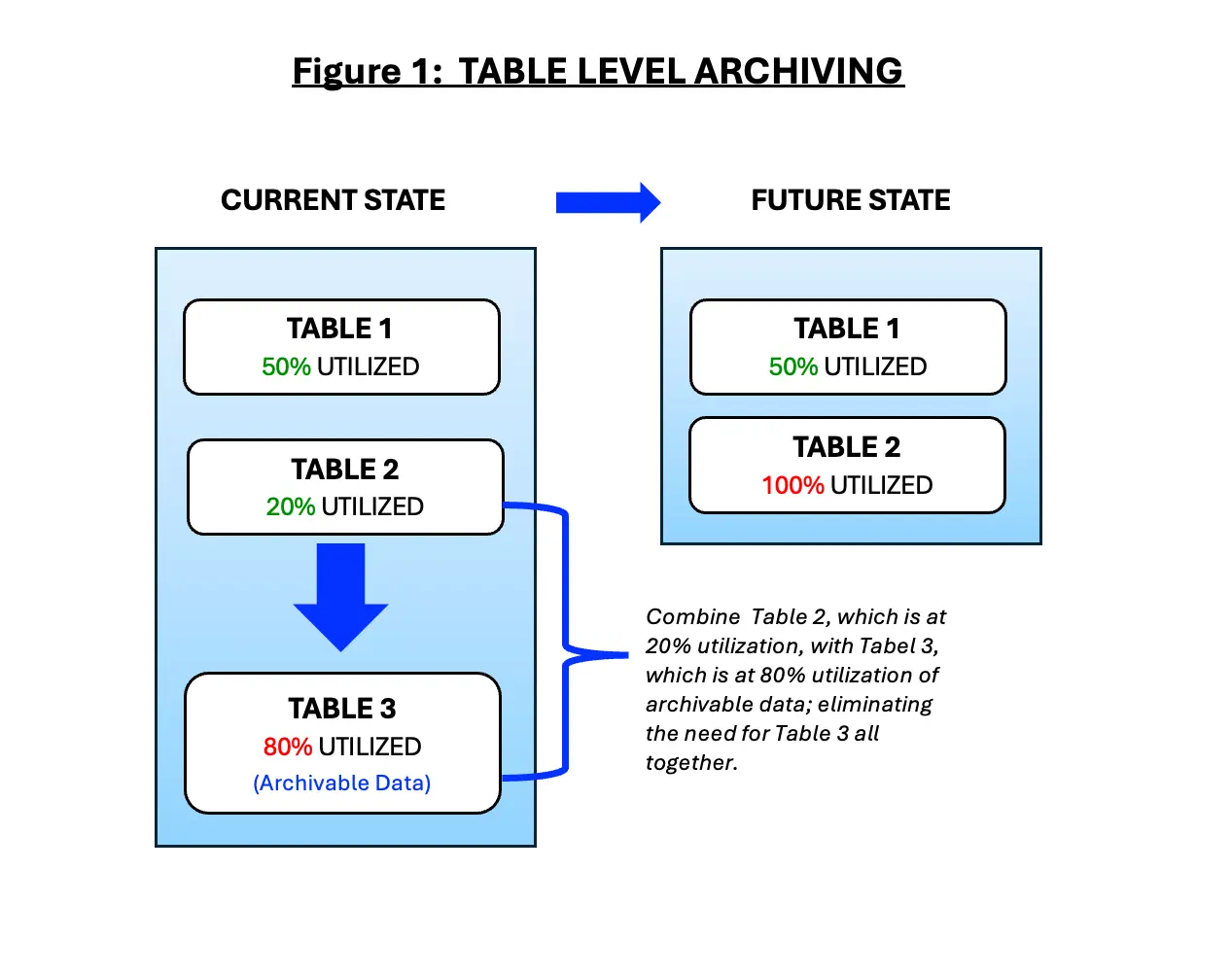

1. Table-Level Archiving (figure 1)

- Moving closed or inactive records to archive tables

- Best for moderate growth scenarios

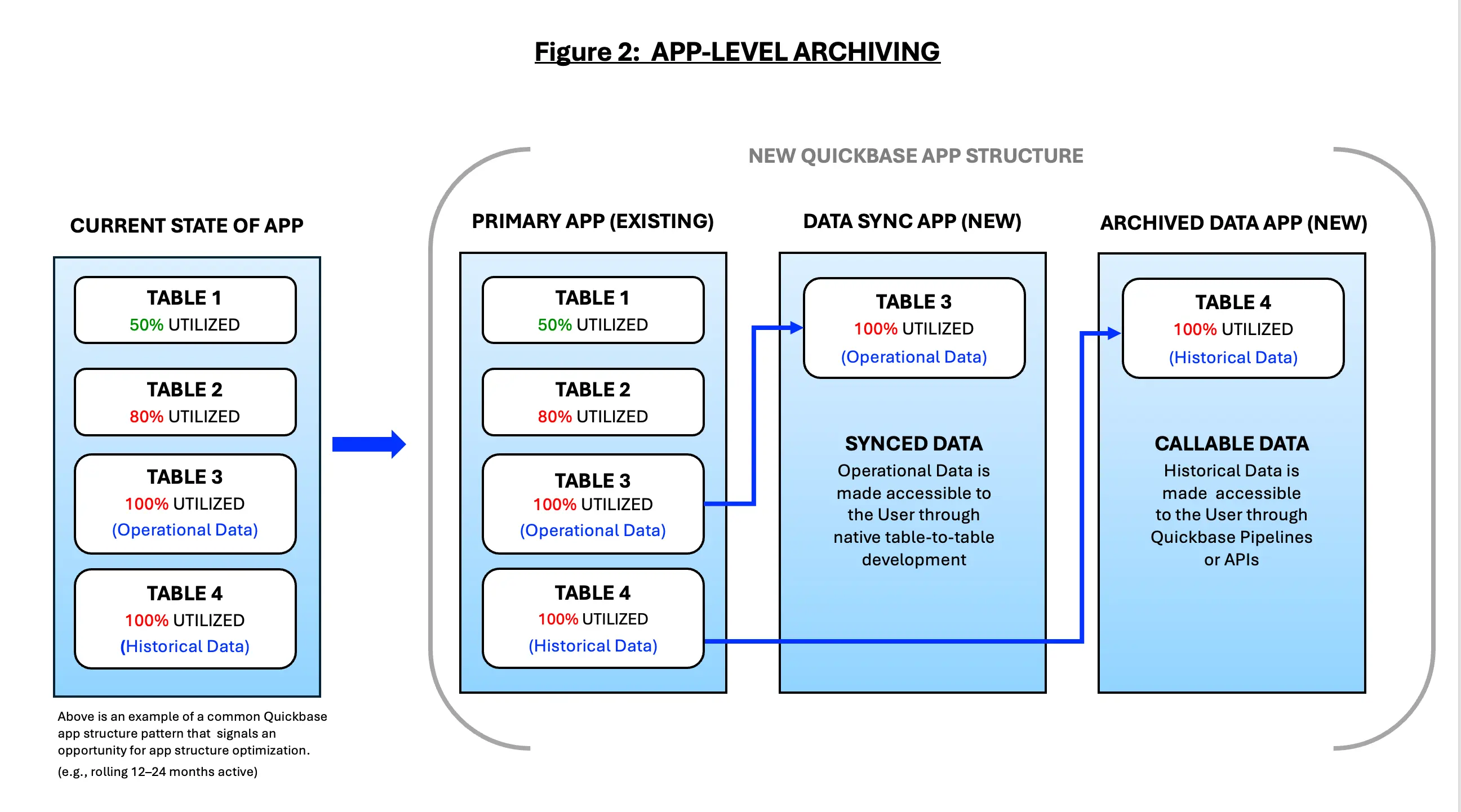

2. App-Level Archiving (figure 2)

- Separating active and historical data into distinct apps

- Read-only historical apps

- Security, permissions, and performance benefits

Why Automate Data Archiving in Quickbase with Pipelines

Automating data archiving in Quickbase using Pipelines allows organizations to efficiently manage data growth, maintain application performance, and enforce data lifecycle policies at scale. In high-usage Quickbase environments—especially across enterprise and data-intensive organizations—manual data management quickly becomes unsustainable.

By leveraging Quickbase Pipelines automation, organizations can automatically move inactive or completed records out of operational tables based on predefined rules such as record age, status, lifecycle stage, or activity history. This reduces table size, improves performance, and ensures users only interact with relevant, active data.

Automation not only preserves system responsiveness but also supports compliance, auditing, and long-term data retention strategies—without disrupting day-to-day business operations.

Key Benefits of Automated Archiving in Quickbase

- Improves application performance by reducing active dataset size

- Optimizes storage usage and costs

- Ensures consistent data lifecycle management

- Supports compliance and audit requirements

- Reduces manual administrative effort

Common Quickbase Pipeline Archiving Strategies

Volume-Based Archiving Triggers

Automatically archive records when a table exceeds a defined record count or growth threshold, preventing performance degradation before it impacts users.

Inactivity-Driven Archiving

Move records that have not been viewed or updated within a set timeframe (e.g., 12–24 months) to archive tables, ensuring operational tables contain only active data.

Process-Completion Archiving

Archive records once workflows are complete (e.g., billing finalized, approvals closed, integrations completed), reducing clutter without interrupting active processes.

Multi-Stage Archiving Workflows

Implement staged transitions such as:

Active → Read-Only → Archived

This allows for controlled retention periods and audit windows before full archival.
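The staged transition above behaves like a small state machine. Here is a minimal sketch, assuming hypothetical windows of 90 days before a closed record becomes read-only and 365 days before it is archived; the stage names follow the article, everything else is illustrative.

```python
from datetime import date, timedelta
from typing import Optional

# Hypothetical retention windows, measured from the date the record was closed.
READ_ONLY_AFTER = timedelta(days=90)
ARCHIVE_AFTER = timedelta(days=365)


def next_stage(stage: str, closed_on: Optional[date], today: date) -> str:
    """Advance a record by at most one stage per run."""
    if closed_on is None:
        return stage  # still open: never advance
    age = today - closed_on
    if stage == "Active" and age >= READ_ONLY_AFTER:
        return "Read-Only"
    if stage == "Read-Only" and age >= ARCHIVE_AFTER:
        return "Archived"
    return stage


today = date(2026, 1, 27)
first_run = next_stage("Active", date(2025, 1, 1), today)
second_run = next_stage(first_run, date(2025, 1, 1), today)
```

Moving one stage per run means even a very old record passes through Read-Only first, which preserves the audit window before full archival.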

Selective Field Preservation

Retain only essential data (e.g., record IDs, dates, financial totals, audit fields) while removing high-volume or non-critical fields to reduce storage consumption.
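Selective field preservation amounts to projecting each record onto a short allow-list before it is written to the archive. The sketch below assumes hypothetical field names; the allow-list itself should come from your retention policy.

```python
# Hypothetical allow-list of fields that must survive archiving.
ESSENTIAL_FIELDS = ("record_id", "closed_on", "invoice_total", "last_modified_by")


def to_archive_row(record: dict) -> dict:
    """Keep only the essential fields, failing loudly if one is missing."""
    missing = [f for f in ESSENTIAL_FIELDS if f not in record]
    if missing:
        raise KeyError(f"record is missing essential fields: {missing}")
    return {f: record[f] for f in ESSENTIAL_FIELDS}


full_record = {
    "record_id": 501,
    "closed_on": "2024-09-30",
    "invoice_total": 1842.50,
    "last_modified_by": "jdoe",
    "notes": "Long free-text history that is not needed in the archive...",
    "preview_html": "<div>...</div>",
}
archive_row = to_archive_row(full_record)
```

Raising on a missing field, instead of silently skipping it, helps catch schema drift before incomplete rows reach the archive.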

Cross-Table Archival Coordination

Archive parent and related child records together to maintain relational integrity and ensure accurate historical reporting.
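One way to keep parent and child records together is to archive a parent only when every related child is also ready. The following sketch assumes hypothetical `id`, `parent_id`, and `closed` fields and is meant to show the selection logic only, not how records are moved.

```python
def archivable_families(parents, children):
    """Return (parent, children) pairs where the whole family is closed."""
    children_by_parent = {}
    for child in children:
        children_by_parent.setdefault(child["parent_id"], []).append(child)

    families = []
    for parent in parents:
        kids = children_by_parent.get(parent["id"], [])
        # A parent with no children qualifies as soon as it is closed.
        if parent["closed"] and all(k["closed"] for k in kids):
            families.append((parent, kids))
    return families


parents = [
    {"id": 1, "closed": True},
    {"id": 2, "closed": True},
    {"id": 3, "closed": False},
    {"id": 4, "closed": True},
]
children = [
    {"id": 10, "parent_id": 1, "closed": True},
    {"id": 11, "parent_id": 1, "closed": True},
    {"id": 12, "parent_id": 2, "closed": False},
    {"id": 13, "parent_id": 3, "closed": True},
]
ready = archivable_families(parents, children)
ready_parent_ids = [p["id"] for p, _ in ready]
```

Parent 2 is held back because one of its children is still open, which avoids leaving an orphaned child in the operational table.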

Conditional Attachment Offloading

Move large or infrequently accessed file attachments to external storage solutions (e.g., cloud repositories) while maintaining reference links in Quickbase.
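The "conditional" part of attachment offloading can be sketched as a predicate plus a small reference row that stays in Quickbase. The size threshold, staleness window, field names (including `last_accessed`, which you would need to track yourself), and the example URL are all assumptions for illustration; the actual upload to external storage is out of scope here.

```python
from datetime import date, timedelta

# Hypothetical thresholds for deciding what leaves native storage.
SIZE_THRESHOLD_BYTES = 10 * 1024 * 1024  # 10 MB
STALE_AFTER = timedelta(days=180)


def should_offload(attachment: dict, today: date) -> bool:
    """Offload files that are large or have not been accessed recently."""
    is_large = attachment["size_bytes"] >= SIZE_THRESHOLD_BYTES
    is_stale = today - attachment["last_accessed"] >= STALE_AFTER
    return is_large or is_stale


def reference_row(attachment: dict, external_url: str) -> dict:
    """Metadata kept on the record once the file itself lives elsewhere."""
    return {
        "record_id": attachment["record_id"],
        "file_name": attachment["file_name"],
        "size_bytes": attachment["size_bytes"],
        "external_url": external_url,
    }


today = date(2026, 1, 27)
attachments = [
    {"record_id": 1, "file_name": "site-video.mp4", "size_bytes": 25 * 1024 * 1024,
     "last_accessed": date(2026, 1, 5)},
    {"record_id": 2, "file_name": "old-quote.pdf", "size_bytes": 200 * 1024,
     "last_accessed": date(2024, 5, 1)},
    {"record_id": 3, "file_name": "logo.png", "size_bytes": 40 * 1024,
     "last_accessed": date(2026, 1, 20)},
]
to_offload = [a["file_name"] for a in attachments if should_offload(a, today)]
ref = reference_row(attachments[0], "https://files.example.com/site-video.mp4")
```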

Compliance-Driven Retention Rules

Apply archiving logic based on regulatory or contractual requirements, such as retaining financial records for seven years or operational logs for shorter periods.
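Retention rules of this kind are often easiest to maintain as a lookup table. The sketch below uses the seven-year financial example from above; the one-year value for operational logs and the category names are hypothetical placeholders, not regulatory guidance.

```python
from datetime import date

# Retention periods in years. Only the financial figure comes from the
# example above; the operational-log value is a placeholder.
RETENTION_YEARS = {"financial": 7, "operational_log": 1}


def deletion_eligible_on(category: str, closed_on: date) -> date:
    """Return the first date on which a record may be deleted."""
    years = RETENTION_YEARS[category]
    try:
        return closed_on.replace(year=closed_on.year + years)
    except ValueError:
        # closed_on was Feb 29 and the target year is not a leap year.
        return closed_on.replace(year=closed_on.year + years, day=28)


financial_date = deletion_eligible_on("financial", date(2020, 3, 15))
leap_day_date = deletion_eligible_on("financial", date(2020, 2, 29))
log_date = deletion_eligible_on("operational_log", date(2025, 6, 1))
```

Keeping the periods in one table means a policy change is a one-line edit, and an unknown category fails with a `KeyError` rather than defaulting to deletion.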

Scheduled Batch Archiving

Run archiving Pipelines during off-peak hours to minimize impact on system performance and user experience.

Audit Logging and Traceability

Automatically log archiving actions (e.g., date, rule applied, Pipeline execution ID) to support governance, compliance audits, and troubleshooting.
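An audit entry for each archiving action can be as small as the three items listed above plus the record it applies to. This is a minimal sketch with hypothetical field names and a made-up run identifier; where the entry is stored (a log table, a file, an external system) is left open.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_entry(record_id: int, rule: str, run_id: str,
                when: Optional[datetime] = None) -> dict:
    """Build one log entry describing a single archiving action."""
    when = when or datetime.now(timezone.utc)
    return {
        "record_id": record_id,
        "action": "archived",
        "rule": rule,
        "pipeline_run_id": run_id,  # hypothetical identifier format
        "archived_at": when.isoformat(),
    }


entry = audit_entry(
    record_id=501,
    rule="closed_over_365_days",
    run_id="run-0001",
    when=datetime(2026, 1, 27, 2, 0, tzinfo=timezone.utc),
)
line = json.dumps(entry, sort_keys=True)  # one JSON object per log line
```

Recording timestamps in UTC avoids ambiguity when Pipelines run during off-peak hours across time zones.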

Key Quickbase Storage Considerations for Governance, Security, and Compliance

As Quickbase environments scale, storage decisions impact more than just performance and cost—they play a critical role in data governance, security, and regulatory compliance. In high-usage Quickbase environments, especially within enterprise and regulated industries (e.g., healthcare, financial services, and SaaS), improper storage management can introduce risk, limit visibility, and create compliance gaps.

A well-defined Quickbase storage strategy ensures that data is properly classified, securely managed, and retained in accordance with business and regulatory requirements—whether stored within Quickbase or in external systems.

Key Governance Considerations for Quickbase Storage

Data Ownership and Stewardship

Clearly define ownership of operational data, archived data, and externally stored assets to prevent orphaned records, inconsistent management, and uncontrolled data growth.

Data Classification and Tiering

Classify data based on sensitivity, business value, and usage frequency to determine appropriate storage locations (e.g., active tables, archive tables, external storage).

Data Lifecycle and Retention Policies

Establish formal policies that define:

- How long data remains active

- When it is archived

- When it is eligible for deletion

These policies should align with legal, regulatory, and internal business requirements.

Change Management and Oversight

Govern Quickbase Pipelines, automations, and integrations that move or archive data. Ensure all changes are reviewed, tested, and documented to minimize risk.

Auditability and Traceability

Maintain detailed logs of data movement, archiving actions, and deletions to support internal governance and external audits.

Key Security Considerations for Quickbase Storage

Access Control Alignment

Ensure user permissions in Quickbase are consistent with access controls in external storage platforms to prevent unauthorized access to sensitive or archived data.

Least-Privilege Access

Apply least-privilege principles, granting users access only to the data necessary for their role—especially for historical or sensitive datasets.

Encryption Standards

Verify that all data is encrypted in transit and at rest, including files and attachments stored outside of Quickbase.

Secure Integration Patterns

Use approved connectors, service accounts, and credential management practices when integrating Quickbase with external storage systems.

Data Loss Prevention (DLP)

Implement monitoring and controls to detect and prevent unauthorized data movement, particularly when transferring files to external repositories.

Key Compliance Considerations for Quickbase Storage

Regulatory Data Retention Requirements

Align storage and archiving practices with regulations such as:

- SOX (Sarbanes-Oxley)

- HIPAA (Healthcare)

- GDPR (Data Privacy)

- Industry-specific compliance mandates

Industry-Specific Guidance

- Healthcare organizations: Follow HIPAA-aligned data storage and retention practices

- Financial services organizations: Consult compliance teams before archiving or moving records to ensure alignment with FINRA Rule 4511 and SEC Rules 17a-3 / 17a-4

Right to Access and Right to Erasure

Ensure systems can retrieve, export, or delete data in response to regulatory or legal requests (e.g., GDPR data subject rights).

Data Residency and Sovereignty

Understand where data is physically stored, especially when using cloud or multi-region storage solutions, to meet jurisdictional requirements.

Legal Hold and eDiscovery Support

Implement controls that prevent deletion or modification of records under legal hold due to litigation or investigation.
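A legal hold is essentially a veto that overrides normal retention rules. The sketch below assumes each record carries a hypothetical `delete_after` date and that held record IDs are available as a set; it only selects purge candidates and does not delete anything.

```python
from datetime import date


def purge_candidates(records, legal_hold_ids, today):
    """Return records past retention that are not under legal hold."""
    return [
        r for r in records
        if r["delete_after"] <= today and r["id"] not in legal_hold_ids
    ]


records = [
    {"id": 1, "delete_after": date(2025, 12, 31)},  # expired, no hold
    {"id": 2, "delete_after": date(2025, 12, 31)},  # expired, on hold
    {"id": 3, "delete_after": date(2027, 1, 1)},    # still in retention
]
legal_hold_ids = {2}
purgeable_ids = [r["id"] for r in purge_candidates(records, legal_hold_ids, date(2026, 1, 27))]
```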

Third-Party Risk Management

Evaluate external storage providers for:

- Security certifications (e.g., SOC 2, ISO 27001)

- Service level agreements (SLAs)

- Incident response and breach notification processes

What Are the Key Quickbase Performance and Cost Optimization Benefits?

Implementing a strategic data archiving and storage optimization approach in Quickbase delivers measurable improvements in application performance, cost efficiency, and operational reliability. In high-usage Quickbase environments—especially within enterprise and data-intensive organizations—proactive data management is not just a technical best practice; it is a critical business initiative.

By actively managing data across its lifecycle, organizations can reduce system strain, improve user experience, and create a scalable foundation for long-term Quickbase success.

Key Benefits of Quickbase Storage and Performance Optimization

Faster Reports and Dashboards

Reducing the volume of active data in Quickbase tables allows queries, reports, and formula calculations to execute more efficiently.

As a result:

- Report load times improve

- Dashboards become more responsive

- Users can access insights faster without delays

This leads to improved productivity and greater confidence in reporting accuracy.

More Reliable Pipelines and Integrations

Smaller, well-structured datasets reduce the processing burden on Quickbase Pipelines, APIs, and third-party integrations.

Benefits include:

- More consistent automation performance

- Fewer pipeline errors and failures

- Easier monitoring and troubleshooting of integrations

Reduced Operational Risk

Unmanaged data growth increases the likelihood of:

- Hitting Quickbase table or storage limits

- Experiencing performance bottlenecks

- Encountering failed automations or outages

A proactive archiving strategy mitigates these risks by controlling data volume and preventing system strain before it impacts operations.

Controlled Storage Growth and Cost Savings

Separating active and historical data helps organizations:

- Slow storage consumption

- Plan capacity more effectively

- Avoid unexpected platform limitations

This leads to more predictable Quickbase storage costs and licensing usage, reducing the need for reactive or emergency scaling decisions.

Improved User Experience, Trust, and Adoption

Fast, reliable applications drive higher user engagement and adoption. By archiving outdated or irrelevant data:

- Interfaces remain clean and focused

- Users interact only with relevant, active records

- System usability and satisfaction improve

This reinforces Quickbase as a trusted system of record, rather than a cluttered data repository.

What Are the External Storage Strategies for Heavy Quickbase Usage?

As Quickbase applications scale, file attachments and high-volume assets often become the primary drivers of storage consumption and performance constraints. In high-usage Quickbase environments—especially within enterprise and data-intensive organizations—relying solely on native storage can lead to slower performance, increased costs, and reduced scalability.

External storage strategies solve this challenge by separating structured transactional data from large or infrequently accessed files. By offloading documents, images, and media to purpose-built storage platforms—while maintaining secure references and metadata within Quickbase—organizations can significantly improve performance, reduce storage costs, and build a more scalable data architecture.

What Are the Recommended External Storage Solutions for Quickbase?

Amazon S3

A highly scalable cloud object storage solution ideal for storing large volumes of files, exports, and attachments. Features include lifecycle policies for cost optimization and long-term retention.

Microsoft SharePoint / OneDrive

Best suited for organizations using Microsoft 365, offering strong version control, permissions alignment, and seamless document collaboration.

Google Drive

A flexible, user-friendly option for teams needing easy file access and sharing, often paired with automation tools to manage permissions and folder structures.

Azure Blob Storage

Ideal for enterprises operating within the Microsoft Azure ecosystem, with robust security, scalability, and integration with analytics and archiving workflows.

Box

Widely used in regulated industries due to advanced governance controls, security features, and compliance certifications.

Enterprise File Servers / Network Drives

Used when organizations require on-premise or controlled infrastructure storage, often to meet strict regulatory or internal data policies.

Content Management Systems (CMS)

Platforms like Alfresco or OpenText provide advanced document lifecycle management, making them suitable for organizations with complex compliance and governance requirements.

Common Quickbase Data Storage and Archiving Pitfalls to Avoid

As Quickbase data grows, many organizations attempt quick fixes for storage and performance issues. However, poorly designed archiving strategies can introduce data integrity risks, reporting gaps, and compliance issues.

Treating Archiving as a One-Time Cleanup

Archiving should be an ongoing data lifecycle process, not a one-time effort. Without continuous governance, data growth will quickly recreate the same performance and storage challenges.

Over-Archiving Active or Needed Data

Aggressive archiving can remove data still required for reporting, audits, or daily operations, leading to broken dashboards and user frustration. Not all older data is inactive, so distinguishing between inactive data and low-frequency data is critical.

Ignoring Attachment Growth

Attachments are one of the fastest-growing storage drivers in Quickbase. Focusing only on record counts while ignoring files can undermine archiving efforts and lead to late-stage, disruptive remediation.

Lack of Rollback or Recovery Planning

Archiving without a recovery strategy introduces significant risk. Mature approaches include:

- Staging tables

- Retention windows before deletion

- Backup and rollback procedures

These safeguards ensure data can be restored if needed.
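The staging and retention-window safeguards can be sketched as three small operations: stage, restore, and purge-check. The 30-day window and the `staged_on` field are assumptions for illustration; a real implementation would also rely on backups.

```python
from datetime import date, timedelta

# Hypothetical window during which a staged record can still be restored.
RESTORE_WINDOW = timedelta(days=30)


def stage(record: dict, today: date) -> dict:
    """Copy a record into staging, stamping when it was staged."""
    return {**record, "staged_on": today}


def restore(staged: dict) -> dict:
    """Return the original record, without the staging metadata."""
    row = dict(staged)
    row.pop("staged_on")
    return row


def can_purge(staged: dict, today: date) -> bool:
    """Allow permanent deletion only after the restore window has passed."""
    return today - staged["staged_on"] >= RESTORE_WINDOW


original = {"id": 77, "status": "Closed"}
staged = stage(original, date(2026, 1, 1))
restored = restore(staged)
purge_early = can_purge(staged, date(2026, 1, 15))
purge_later = can_purge(staged, date(2026, 2, 1))
```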

How to Build a Long-Term Quickbase Archiving Strategy

A long-term Quickbase archiving roadmap is essential for maintaining performance, scalability, and governance as data volumes grow. Without a clear strategy, applications can become bloated, slow, and costly to maintain.

A well-designed approach ensures:

- Active data remains fast and accessible

- Historical data is retained appropriately for reporting and compliance

- Storage growth is controlled and predictable

Most importantly, it shifts archiving from a reactive cleanup effort to a proactive data lifecycle strategy, enabling organizations to scale confidently.

Designing Quickbase for longevity requires proactive data management, including archiving, external storage, and governance. This ensures performance, scalability, and compliance as data volumes grow.

Top FAQs: Quickbase Storage, Archiving, and Performance Optimization

1. What is the best way to manage large data volumes in Quickbase?

The best way to manage large data volumes in Quickbase is to implement a data lifecycle management strategy that separates active data from historical data. This includes using archiving techniques, external storage solutions (like Amazon S3 or SharePoint), and automation via Quickbase Pipelines. These practices improve performance, reduce storage costs, and ensure scalability in high-usage Quickbase environments.

2. When should you archive data in Quickbase?

You should archive data in Quickbase when records are no longer actively used in daily operations but still need to be retained for reporting, compliance, or audit purposes. Common triggers include record age (e.g., 12–24 months), process completion, or inactivity thresholds. Proactive archiving helps maintain performance and prevent storage-related issues.

3. How do Quickbase storage limits affect performance?

Quickbase storage constraints, such as large record counts, high attachment volumes, and complex data relationships, can slow down reports, dashboards, Pipelines, and API performance. As data grows, applications may experience longer load times and reduced responsiveness. Optimizing storage through archiving and external file management helps maintain consistent performance.

4. What are the benefits of using external storage with Quickbase?

Using external storage with Quickbase allows organizations to offload large files and attachments to platforms like Amazon S3, Microsoft SharePoint, or Azure Blob Storage. This reduces table size, improves application speed, lowers storage costs, and supports scalable architecture—especially for enterprise and data-intensive organizations.

5. What is considered “heavy” Quickbase usage?

Heavy Quickbase usage typically includes applications with hundreds of thousands to millions of records, multi-year data retention (5–15+ years), high attachment usage, and frequent pipelines or integrations. These environments require proactive performance optimization and storage management to remain efficient and scalable.

6. How do Quickbase Pipelines help with data archiving?

Quickbase Pipelines automate data archiving by moving records based on rules like age, status, or inactivity. This reduces manual effort, ensures consistent data lifecycle management, and improves system performance by keeping operational tables lean and efficient.

7. What are common mistakes when archiving Quickbase data?

Common Quickbase archiving mistakes include:

- Treating archiving as a one-time cleanup instead of an ongoing process

- Archiving data that is still needed for operations or reporting

- Ignoring attachment storage growth

- Not having a rollback or recovery plan

Avoiding these pitfalls ensures better performance, data integrity, and long-term scalability.

8. How can you reduce Quickbase storage costs?

To reduce Quickbase storage costs, organizations should:

- Archive inactive records regularly

- Offload large attachments to external storage

- Remove unnecessary or duplicate data

- Implement lifecycle-based retention policies

These strategies help control storage growth and optimize licensing usage.

9. How do you ensure compliance when archiving Quickbase data?

Compliance in Quickbase archiving requires aligning data practices with regulations such as HIPAA, GDPR, SOX, and FINRA. This includes implementing data retention policies, access controls, encryption, audit logging, and legal hold processes. Organizations in regulated industries should also validate external storage providers for compliance certifications.

10. What is the difference between active and historical data in Quickbase?

Active data in Quickbase is frequently accessed and updated for daily operations, while historical data consists of older records retained for audits, reporting, or compliance. Separating these data types improves performance, reduces storage usage, and supports scalable application design.

11. How often should Quickbase data be archived?

Quickbase data should be archived on a regular, automated schedule—such as monthly, quarterly, or based on real-time triggers (e.g., inactivity or process completion). The exact frequency depends on data growth, business needs, and compliance requirements.

12. Can archiving data in Quickbase improve user experience?

Yes. Archiving improves user experience by reducing clutter, speeding up reports and dashboards, and ensuring users interact only with relevant data. Faster, more responsive applications increase user trust and adoption across the organization.

13. What industries benefit most from Quickbase storage optimization?

Industries with high data volume and compliance requirements benefit most, including:

- Healthcare (HIPAA compliance)

- Financial services (FINRA, SEC regulations)

- Construction and project management

- SaaS and technology companies

These organizations rely on scalable, secure data management to maintain performance and meet regulatory standards.

14. What is a Quickbase archiving strategy?

A Quickbase archiving strategy is a structured approach to moving inactive data out of operational tables while preserving access for reporting and compliance. It typically includes automation (Pipelines), external storage, retention policies, and governance controls to ensure long-term scalability and performance.

15. Why is data lifecycle management important in Quickbase?

Data lifecycle management in Quickbase ensures that data is stored, archived, and deleted according to its usage and business value. This improves performance, reduces costs, enhances compliance, and allows organizations to scale their Quickbase applications efficiently.

- Author: Logan Lott

- Title: Solution Consultant | Quickbase

- Email: llott@quandarycg.com

- Date Published: 01/27/2026


© 2026 Quandary Consulting Group. All Rights Reserved.
