How to Identify Your Company's AI Governance Gap

Board meetings have long been anchored in a consistent set of quantitative performance indicators—revenue, pipeline generation, growth, demand, customer satisfaction, and P&L. These metrics provide a clear, standardized view of organizational health, enabling boards to assess performance, identify risk, and hold leadership accountable.

Over time, governance models have matured around these indicators. Financial performance is not only tracked—it is audited, benchmarked, and continuously monitored through well-defined frameworks.

However, as organizations accelerate investment in Agentic AI, a new and largely unaddressed question is emerging:

Is Agentic AI being governed with the same level of rigor?

Unlike traditional systems, Agentic AI introduces decision-making into the operational fabric of the enterprise. These systems are not simply executing predefined logic—they are interpreting context, making autonomous decisions, and taking action across business functions.

Yet, despite this shift, most organizations:

- Do not have a standardized metric to evaluate AI governance

- Cannot quantify the impact, risk, or alignment of AI systems

- Lack visibility into how AI decisions are made and where they are influencing outcomes

In the boardroom, any domain without a quantifiable, trackable metric represents a structural blind spot. Today, AI governance is one of the largest emerging blind spots in enterprise oversight.

The AI Governance Gap No One Is Addressing

Over the past several years, a consistent pattern has emerged across enterprise environments:

Boards are actively encouraging AI investment and innovation—approving budgets, supporting experimentation, and pushing leadership to “move faster” on AI adoption.

However, in parallel, very few organizations have established a corresponding governance model.

This gap is not due to a lack of awareness—it stems from a fundamental shift in the nature of the technology itself.

Traditional enterprise systems are:

- Deterministic (predictable outputs for given inputs)

- Process-driven (bounded by defined workflows)

- Auditable through logs and controls

By contrast, agentic AI systems are:

- Adaptive (they learn and evolve over time)

- Probabilistic (outputs are not always predictable)

- Autonomous (they can initiate actions without explicit human instruction)

These systems:

- Perceive data across multiple sources

- Interpret context and intent

- Decide on a course of action

- Execute tasks across systems and workflows

Increasingly, they are embedded in mission-critical functions, including:

- Revenue operations (pipeline prioritization, pricing recommendations)

- Customer engagement (automated interactions, personalization engines)

- Financial planning and forecasting

- Talent and workforce decisions

In effect, these systems are becoming participants in decision-making, not just tools.

Why This Creates a Governance Challenge

The introduction of agentic AI fundamentally changes where decisions are made and how accountability is distributed. Historically:

- Decisions were made by people, supported by systems

- Accountability was clearly assigned to roles and functions

- Oversight was applied through organizational hierarchies

Now:

- Decisions are increasingly delegated to AI systems

- Accountability becomes diffused or unclear

- Oversight mechanisms are lagging behind system capabilities

This creates several critical risks:

- Invisible decision-making: AI systems influencing outcomes without clear visibility

- Misalignment with strategy: Agents optimizing locally without aligning to enterprise goals

- Unmanaged risk exposure: Actions taken without sufficient controls or escalation paths

- Inconsistent governance: Different AI systems operating under different (or no) standards

Despite these risks, most boards today:

- Receive high-level AI updates, but not governance metrics

- Approve AI investments, but lack tools to assess ongoing oversight

- Rely on qualitative narratives, rather than quantitative accountability

The Core Disconnect

There is a growing imbalance in how organizations govern their business:

- Financial performance is measured with precision, frequency, and accountability

- AI systems, which increasingly influence that performance, are not

This creates a structural gap: Boards are governing outcomes—but not the systems increasingly responsible for producing them. Without a way to measure AI governance maturity, organizations cannot:

- Compare performance over time

- Benchmark against peers

- Identify gaps before they become risks

- Hold leadership accountable for responsible AI deployment

Why This Matters Now

This gap is not theoretical; it is already material. As AI systems expand in scope, increase in autonomy, and integrate deeper into core operations, the impact of insufficient governance compounds.

Organizations that fail to address this will face:

- Increased operational risk

- Greater regulatory exposure

- Reduced trust from customers and stakeholders

- Missed opportunities for strategic advantage

Conversely, organizations that establish measurable AI governance will be better positioned to:

- Scale AI with confidence

- Align AI investments to business value

- Maintain control as systems become more autonomous

Introducing the Agentic Index

The foundational idea is straightforward: "What if AI governance could be measured with the same rigor as financial performance?"

The Agentic Index provides a standardized score (0–100) that quantifies an organization’s AI governance maturity.

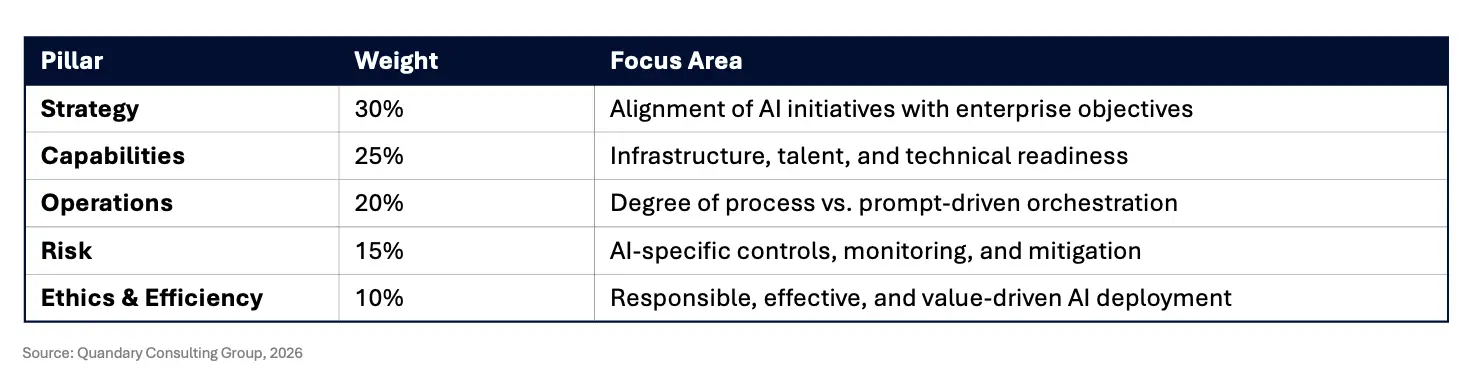

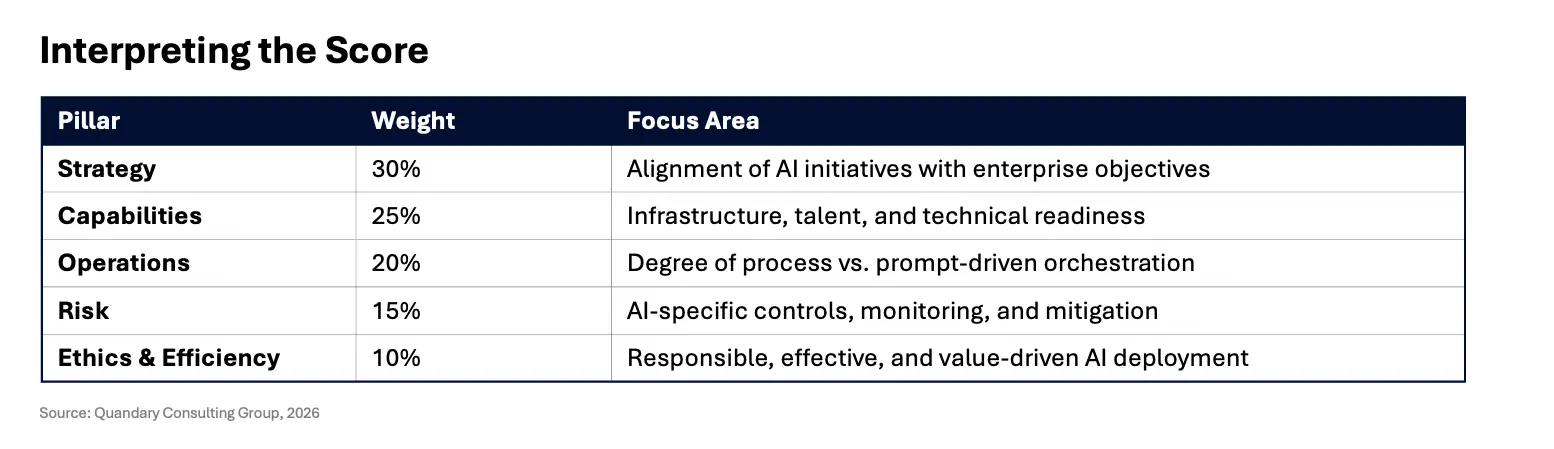

It evaluates performance across five key pillars.

Each pillar is assessed through a structured set of criteria and combined into a weighted composite score.

Most organizations today fall between 25 and 45—indicating active AI deployment without corresponding governance maturity.
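As a rough illustration of how a weighted composite score could be computed, here is a minimal Python sketch. It is not the published Agentic Index methodology. Only the 30% Strategy weight comes from this article; the other pillar names, their weights, and the example scores are hypothetical placeholders.

```python
# Pillar weights in percent. Only "strategy" (30%) is taken from the article;
# the remaining pillar names and weights are illustrative assumptions.
PILLAR_WEIGHTS = {
    "strategy": 30,
    "capability": 20,
    "risk": 20,
    "ethics": 15,
    "operations": 15,
}


def agentic_index(pillar_scores: dict[str, float]) -> float:
    """Combine per-pillar scores (each 0-100) into a weighted composite (0-100)."""
    if set(pillar_scores) != set(PILLAR_WEIGHTS):
        raise ValueError("scores must cover exactly the configured pillars")
    for name, score in pillar_scores.items():
        if not 0 <= score <= 100:
            raise ValueError(f"{name} score {score} is outside 0-100")
    total_weight = sum(PILLAR_WEIGHTS.values())
    weighted_sum = sum(
        PILLAR_WEIGHTS[name] * score for name, score in pillar_scores.items()
    )
    return weighted_sum / total_weight


# Made-up scores for an organization with active deployment but weak governance.
example_scores = {
    "strategy": 30,
    "capability": 60,
    "risk": 25,
    "ethics": 40,
    "operations": 40,
}
print(agentic_index(example_scores))
```

With these made-up inputs, strong capability only partly offsets low Strategy and Risk scores, so the composite lands inside the 25 to 45 band described above.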

To learn more about the Agentic Index, we recommend "Why Every Board Needs An ‘Agentic Index’ In The World Of Agentic AI" by Bharath Yadla, Forbes Councils Member (March 2026).

Why Strategy Matters Most

The Strategy pillar (30%) carries the greatest weight because it determines whether AI creates enterprise value—or simply adds complexity at scale.

At its core, strategy answers a fundamental question: Why are we deploying AI, and where should it create value?

Without a clearly defined enterprise AI strategy, organizations tend to fall into one of three common traps:

1. Fragmented AI Deployment

Different business units independently deploy AI solutions—often leveraging tools like Copilot, Gemini, or custom LLM applications—without coordination. The result:

- Redundant capabilities across teams

- Inconsistent data usage and governance

- Increased cost with limited enterprise impact

2. Misaligned Optimization

Agentic systems are designed to optimize for specific outcomes—but without strategic alignment, they may optimize for the wrong objectives. For example:

- A revenue-focused AI agent prioritizes short-term conversion at the expense of customer lifetime value

- A cost-efficiency model reduces spend but introduces operational or compliance risk

- A customer service agent improves speed but degrades experience quality or brand perception

In each case, the AI is functioning “correctly” but is strategically misaligned.

3. Technology-Led vs. Business-Led Transformation

Organizations with strong Capabilities (e.g., infrastructure, talent, tooling) often default to a technology-first approach, deploying AI because they can—not because they should. This leads to:

- High activity, low impact

- Innovation without measurable ROI

- Difficulty scaling beyond pilot programs

Strategy as the Control Layer

Strategy is not simply a planning exercise; it serves as the control layer for all AI activity across the enterprise.

A well-defined Strategy pillar ensures that AI initiatives are directly aligned with enterprise priorities and that agentic systems operate within clearly defined business and governance boundaries. It also ensures that critical trade-offs—such as growth versus risk—are explicitly governed by leadership, rather than implicitly determined by autonomous models.

In addition, strategy defines where AI-driven autonomy is appropriate and where human oversight must be retained. It establishes which decisions can be safely delegated to AI systems and which must remain under direct human control. Just as importantly, it provides a clear framework for measuring success, ensuring that AI initiatives are evaluated based on their contribution to enterprise outcomes rather than isolated performance metrics.

The Risk of Getting Strategy Wrong

When strategy is weak or misaligned, strong performance in other areas cannot compensate.

Organizations with high technical capabilities but low strategic alignment often achieve scale without direction, resulting in widespread inefficiencies. Similarly, organizations with strong ethical frameworks but weak strategy may execute responsibly, yet focus on initiatives that fail to deliver meaningful business value. Even highly optimized operations can result in efficient execution of low-impact or misaligned activities.

In effect, AI does not simply scale capability—it scales intent.

If strategy is unclear, AI will amplify that misalignment across the enterprise. This is why Strategy carries the greatest weight within the Agentic Index: it determines whether AI becomes a multiplier of enterprise value or a multiplier of inefficiency and risk.

Enabling a More Effective Board Conversation

The introduction of the Agentic Index fundamentally changes how AI is discussed at the board level.

Today, most AI-related discussions remain largely qualitative, anecdotal, and forward-looking. Leadership teams often describe progress in terms of pilots launched, use cases explored, or future plans for scaling. While informative, these updates lack the objectivity and comparability required for effective governance.

What is missing is a standardized way to measure performance.

From Narrative to Measurement

The Agentic Index shifts the conversation from activity to impact, from experimentation to accountability, and from narrative to measurement.

Rather than relying on general updates, boards can evaluate specific, quantifiable indicators of progress and risk. For example, leadership can report that the organization’s Agentic Index has improved from one quarter to the next, while also identifying which dimensions—such as Strategy, Capability, or Risk—are driving that change.

This introduces a fundamentally different level of clarity and precision into board-level discussions.
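To make the quarter-over-quarter view concrete, the sketch below breaks a change in the composite score into per-pillar contributions. It assumes the composite is a simple weighted average. Apart from the 30% Strategy weight, the pillar names, weights, and quarterly scores are hypothetical.

```python
# Weights in percent. Only Strategy's 30% comes from the article; the rest are
# illustrative assumptions.
WEIGHTS = {
    "strategy": 30,
    "capability": 20,
    "risk": 20,
    "ethics": 15,
    "operations": 15,
}


def index_change_by_pillar(
    previous: dict[str, float], current: dict[str, float]
) -> dict[str, float]:
    """Return each pillar's contribution, in index points, to the composite change."""
    return {
        name: weight * (current[name] - previous[name]) / 100
        for name, weight in WEIGHTS.items()
    }


# Made-up pillar scores for two consecutive quarters.
q1 = {"strategy": 30, "capability": 60, "risk": 25, "ethics": 40, "operations": 40}
q2 = {"strategy": 40, "capability": 60, "risk": 35, "ethics": 40, "operations": 30}

contributions = index_change_by_pillar(q1, q2)
total_change = sum(contributions.values())

# List the largest movers first so the main drivers are easy to see.
for name, points in sorted(
    contributions.items(), key=lambda item: abs(item[1]), reverse=True
):
    print(f"{name:<11}{points:+.1f}")
print(f"{'total':<11}{total_change:+.1f}")
```

A breakdown like this lets a board see that an overall gain can coexist with a decline in one pillar, which a single headline number would hide.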

What This Enables at the Board Level

The adoption of a standardized AI governance metric enables several critical capabilities.

- First, it allows boards to recognize patterns over time. Directors can assess whether AI governance maturity is improving, stagnating, or regressing, in much the same way they evaluate financial performance.

- Second, it enables target setting and accountability. Leadership teams can be held responsible for improving specific areas of weakness, achieving defined maturity thresholds, and ensuring alignment between AI initiatives and enterprise strategy.

- Third, it leads to more informed and strategic questioning. Instead of asking broad, open-ended questions about AI progress, boards can focus on specific issues, such as why governance maturity in risk is lagging behind technical capability, or whether the organization is scaling AI faster than it is governing it.

- Finally, it supports more effective investment decisions. Boards gain greater clarity on where to increase investment, where to pause or recalibrate, and whether AI initiatives are delivering meaningful enterprise value.

This Is What Governance Looks Like

Effective governance requires more than visibility.

It requires measurement, comparability, and accountability.

The Agentic Index introduces all three, transforming AI from an area of limited oversight into one that can be governed with the same rigor as other critical business functions.

Recommended Actions for Boards

To operationalize AI governance, boards should take a structured and disciplined approach.

- First, they should request a quantifiable measure of AI governance maturity. If leadership is unable to produce a standardized score, this indicates not just a reporting gap, but a fundamental lack of governance infrastructure.

- Second, boards should review pillar-level performance, not just the composite score. While the overall index provides a high-level signal, the underlying dimensions reveal where risks and opportunities exist. A strong overall score can mask significant weaknesses in areas such as risk or operational control.

- Third, boards should establish a regular governance cadence, incorporating AI governance metrics into existing quarterly reporting structures. Over time, this creates consistency, institutional awareness, and continuous improvement.

- Finally, boards should define clear governance thresholds, including minimum acceptable performance levels, target maturity goals, and escalation triggers when those thresholds are not met. This introduces discipline and reinforces accountability across the organization.
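One way to picture governance thresholds is as a small rule set applied to each pillar score. The following is a minimal sketch under stated assumptions: the pillar names, threshold values, scores, and status labels are all hypothetical and would need to be set by each board.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    minimum: float  # below this level, the pillar triggers escalation
    target: float   # maturity goal leadership is expected to reach


def review_pillars(
    scores: dict[str, float], thresholds: dict[str, Threshold]
) -> dict[str, str]:
    """Classify each pillar as 'escalate', 'below target', or 'on target'."""
    status = {}
    for name, score in scores.items():
        limits = thresholds[name]
        if score < limits.minimum:
            status[name] = "escalate"
        elif score < limits.target:
            status[name] = "below target"
        else:
            status[name] = "on target"
    return status


# Hypothetical thresholds and scores, for illustration only.
thresholds = {
    "strategy": Threshold(minimum=40, target=70),
    "capability": Threshold(minimum=40, target=70),
    "risk": Threshold(minimum=40, target=70),
    "operations": Threshold(minimum=40, target=70),
}
scores = {"strategy": 75, "capability": 80, "risk": 35, "operations": 55}

for pillar, result in review_pillars(scores, thresholds).items():
    print(f"{pillar}: {result}")
```

In this example two pillars meet their targets while risk falls below its minimum, which is the kind of pillar-level weakness a strong composite score can mask.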

The Organizations That Will Lead

As AI systems become more autonomous, embedded, and influential, the competitive landscape will shift accordingly.

Success will not be defined by which organizations deploy the most AI or move the fastest. Instead, it will be defined by which organizations can govern AI most effectively at scale.

The Current Reality

Today, many organizations are scaling AI faster than they are governing it. Investments are often approved based on projected potential rather than measurable performance, and leadership teams frequently lack a clear view of risk exposure or strategic alignment.

As a result, many boards are effectively operating without full visibility into one of the most powerful forces shaping their organizations.

In practical terms, they are flying blind.

What Leadership Will Look Like

Leading organizations will take a fundamentally different approach.

They will treat AI governance as a core board-level responsibility, measure AI performance with the same rigor as financial performance, and establish clear accountability structures for AI systems and outcomes.

They will also ensure that AI initiatives remain continuously aligned with enterprise strategy, rather than evolving independently of it.

The Role of the Agentic Index

The Agentic Index enables this shift by providing a standardized measurement framework, a common language for governance discussions, and a mechanism for accountability and continuous improvement.

This transforms AI from a black box of innovation into a governed, measurable, enterprise capability.

Our Final Thought

Every major transformation in business—from financial reporting to cybersecurity—has required the development of new governance models, frameworks, and metrics.

Agentic AI is no exception.

Organizations that recognize this early—and act decisively—will not only manage risk more effectively, but also unlock greater, more sustainable value from AI.

If you are a board member or executive leader, there are three immediate actions to take:

- Ask for a measurable view of AI governance

- Demand visibility into where AI is making decisions

- Establish a regular cadence for governance review

If these do not exist, that is not a reporting issue—it is a governance gap.

How Quandary Can Help

At Quandary Consulting Group, we help organizations close the AI governance gap and operationalize frameworks like the Agentic Index.

Our approach combines:

- AI strategy and governance design

- Copilot, Gemini, OpenAI, and Anthropic enablement

- Model Context Protocol (MCP) integration for secure, enterprise-grade AI

- Risk, compliance, and data governance frameworks

- End-to-end transformation support—from assessment to deployment and scale

We work directly with executive teams and boards to:

- Establish measurable AI governance models

- Align AI investments to business outcomes (ROI)

- Enable responsible, scalable AI adoption

Connect with our team to start building a governed, enterprise-ready AI strategy.